What Are We Building Instead?

Why the future of AI depends on questions we're not asking

For over a century, psychologists have tried to understand the mind and behaviour. Philosophical inquiries into consciousness and perception stretch back millennia. We are still refining our theories of cognition, bias, identity, and agency. We have not “arrived” at a final understanding of what it means to be human.

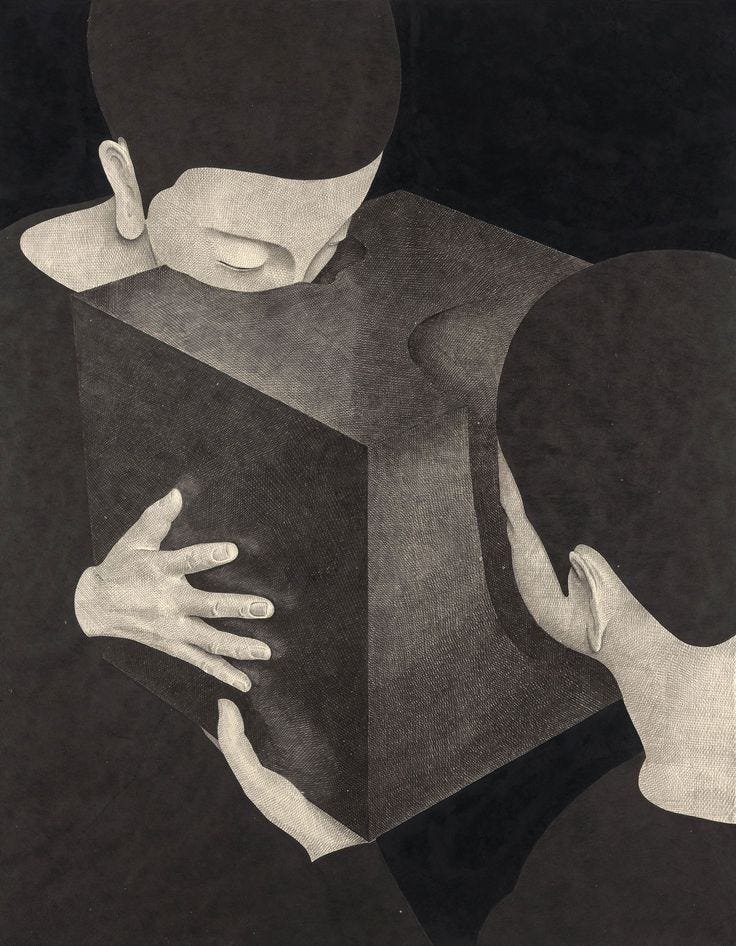

And now we are building systems that model, predict, and simulate aspects of that mind.

We are told these systems are black boxes. That we do not fully understand them. I am less interested in whether that is technically precise and more interested in what it reveals. Even if we increase interpretability, even if we map mechanisms more cleanly, there may always remain something we do not know or fully understand. There will remain emergent effects, social consequences, and psychological shifts that cannot be fully anticipated in advance.

Does it matter that we may never fully understand these systems? I think it does. Not because mystery is inherently threatening, but because these technologies are already reshaping digital agency, attention, authorship and trust. They are not neutral tools sitting on a shelf. They are becoming environments.

We are scrabbling for language. As my friend and colleague, the philosopher Dr Katie Evans, puts it, we need to start signalling when we are operating with “low semantic stock”. That is, when our vocabulary has not caught up with what we are trying to describe.

These systems are neither conscious beings nor simple instruments. They are not minds. But they are not inert tools either. Through ranking, generation and personalisation, they structure what we see, what we can say, and how we participate. They mediate perception and participation at scale. Yet much of our public vocabulary swings between anthropomorphism and trivialisation. Either they are sentient threats, or they are just tools and nothing more. That conceptual oscillation leaves out their infrastructural role. And I think that is a grave mistake.

Whether we like it or not, these systems increasingly mediate how reality is described, classified, and acted upon. And we should be honest about this: current AI systems are not designed to help us think critically, to question authority, or to resist influence. They are designed to be helpful, fluent, and agreeable.

On yesterday’s panel at the AI day in Paris, we explored the dual nature of AI: how it shapes beliefs, how it can distort or deepen perception, and how it can expand or erode digital agency. How it can break and build, often simultaneously.

I am trying to hold on to that tension. To resist the gravitational pull toward certainty. To resist the framing that demands we declare ourselves either for or against. Over the last few weeks, I have sat on panels, some of which were so technosolutionist that my insistence on context made me sound almost outdated. As if asking about societal fabric was a kind of resistance to progress. But I will not budge on this: these technologies are not arriving on neutral, let alone healthy, societal ground.

They are unfolding within a polycrisis. A meta-crisis of meaning. Geopolitical fracture. Climate collapse. Polarisation. Loneliness. Societal grievances towards institutions.

And in that context, I worry about the assumption, or promise, that technology will fill the gaps and solve it all. That it will compensate for eroded civic infrastructure. That it will soothe loneliness, repair discourse, and optimise inefficiency away.

Can it help? Possibly. In certain domains, certainly.

But is that enough? Or are we outsourcing relational, political and cultural repair to systems optimised for scale?

No system can meaningfully restore agency in societies marked by precarity, isolation and institutional abandonment. Critical thinking cannot be engineered into interfaces if people lack housing security, time or trust. Information integrity cannot survive if journalism collapses. Agency cannot flourish if opting out of surveillance is socially or economically costly.

In other words, the conditions that make responsible AI possible are not only technical. They are social.

The future of AI will not be decided solely by how intelligent these systems become. It will be shaped by whether societies choose to rebuild the social, epistemic and institutional foundations that make agency possible in the first place.

Do we treat intelligence infrastructure as a public good or as a private asset?

Do we accept surveillance as the price of participation, or do we design alternatives?

Do we allow AI to replace social worlds we no longer maintain, or do we insist that it remains a tool within them?

I do not believe that these are abstract questions. If anything, they demand a more holistic view of funding and ownership. For as long as AI development is driven primarily by surveillance-based, profit-maximising models, claims about public benefit will remain structurally fragile. If we want technology that genuinely serves collective well-being, it has to be funded and governed in ways that do not depend on extraction, behavioural prediction or perpetual expansion.

This is not a call to abandon innovation. It is a call to examine the conditions under which innovation unfolds.

Before our panel, Kristen and I talked at length about the uncomfortable possibility that things may have to get worse before they get better. And about a question that feels increasingly urgent: if we are not trying to beat the existing forces at their own game, then what are we building instead?

If competition, extraction, and acceleration are not the only logics available to us, what alternative logics are we willing to articulate? What world are we trying to build?

Do we need new technologies for that? Or do we need to govern existing ones differently? Do we need to reinvest in civic infrastructure, in democratic processes, in education, in shared spaces? Do we need to stop breaking what already works?

The most pressing societal challenges we face are not purely technological ones. They will not be solved by optimisation alone. They require imagination, restraint, and collective deliberation. They require culture.

In my own work, time and again, I see that people are not simply pro-AI or anti-AI. They are hungry to be included. They want spaces to ask questions without being dismissed. They want to understand what is happening to their agency, their work, and their creativity. They want a say in shaping it.

That hunger matters. That hunger can be easily exploited.

If responsibility is something we merely “add on” after the fact, we will always be reacting. If ethics is a layer we slap on once the system is built, we are already late.

I am actively searching for individuals who genuinely care about building a future worth dreaming about. Not a future that is simply faster or more efficient. A future that is more humane, more participatory, more deliberate.

I know you are out there.

Where are you?

Thank you for articulating this critical question. Not only is what you say essential and true, it’s also the biggest opportunity that AI offers for genuine human well-being. So much of our current system doesn’t work well for us at a human level. We should seize the chance to rethink and rebuild better, in as participatory a way as possible. I’ve been inspired by the work of Audrey Tang and Jon Alexander, and I think we should have a citizens’ conversation around these issues. Would love to discuss more!